Google has released an updated AI model, Gemini 2.5 Flash Image, designed to provide users with more control over image generation and editing. The model, which was anonymously tested on the crowdsourced evaluation platform LMArena under the codename “Nano Banana,” is now integrated into the consumer-facing Gemini app and available to developers through the Gemini API, Google AI Studio, and Vertex AI.

According to Google, the release responds to user feedback requesting higher-quality images and more powerful creative control than previous versions offered. The company highlights several key capabilities of the new model.

Core capabilities

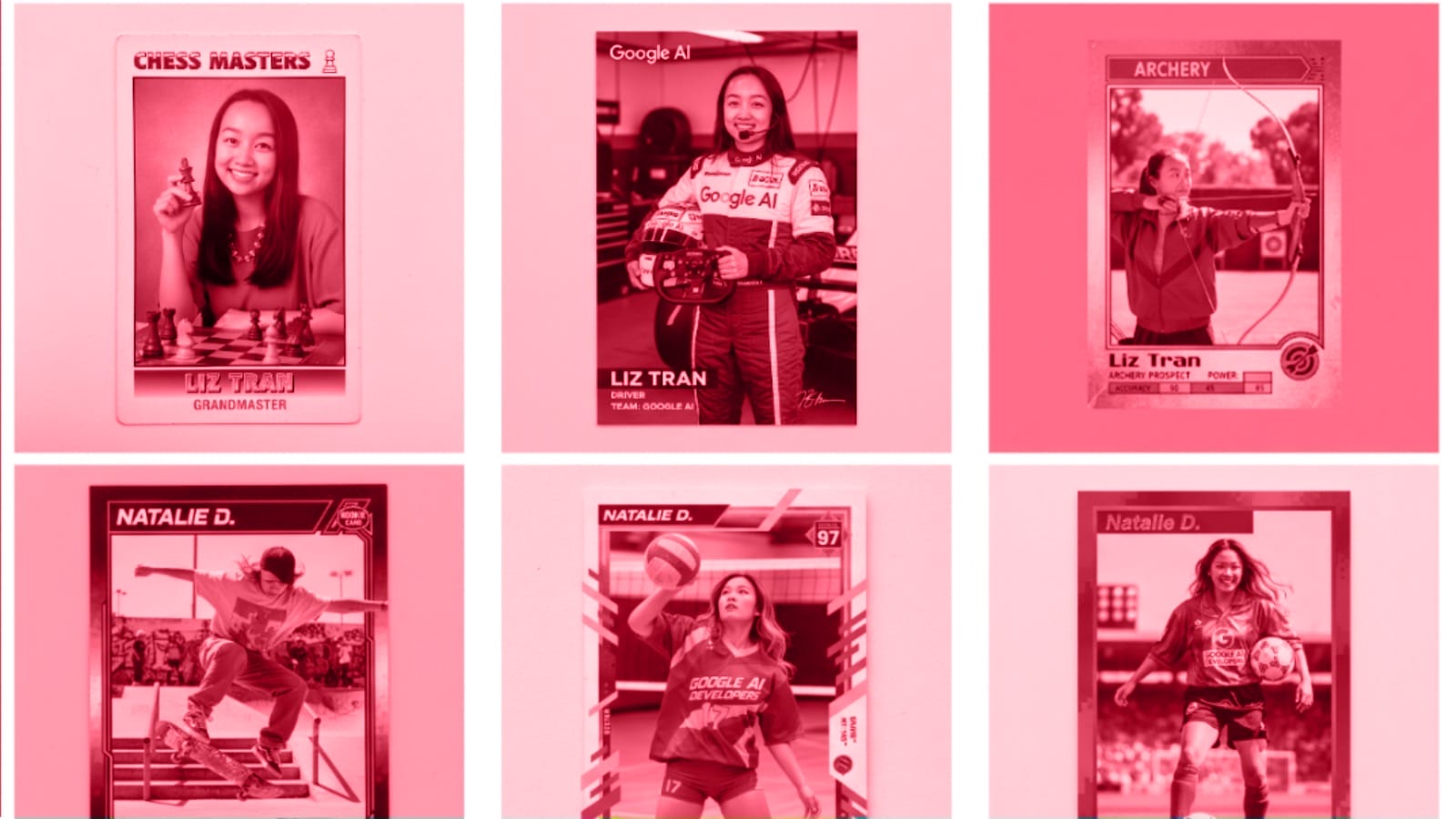

A primary focus of the update is improving the consistency of subjects in images. Google states that the model can maintain the likeness of a person, pet, or object across multiple edits and prompts. This allows users to place the same character in different settings or outfits without distorting their core features, addressing a common challenge in AI image generation.

Other significant features include:

- Prompt-based editing: Users can make specific, localized changes to an image using natural language commands. Examples provided by Google include blurring a background, removing an object, or changing a person’s pose.

- Multi-image fusion: The model can blend elements from multiple source images into a single, cohesive new image. This can be used to combine people and objects into new scenes or apply the style and texture of one image to another.

- Multi-turn editing: Users can perform a series of sequential edits on an image, with the model preserving the context from one step to the next. For example, a user could start with an empty room, add paint to the walls, and then insert furniture in subsequent steps.

- World knowledge: The model leverages the broader knowledge base of the Gemini family, which Google claims allows it to better understand and generate images related to real-world concepts and diagrams.

The release positions Google in a competitive market for generative AI image tools. Reporting from outlets like TechCrunch and VentureBeat frames the update as an effort to compete with offerings from rivals such as OpenAI, Midjourney, and Adobe. Before the release, the “Nano Banana” model had already generated considerable excitement among social media users, who praised its ability to follow complex instructions.

For developers and enterprise users, Google has priced the model at $0.039 per generated image through its API. The company also announced partnerships with platforms like OpenRouter.ai and fal.ai to broaden developer access.
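To put the per-image price in perspective, here is a minimal cost-estimate sketch in Python. It assumes a flat $0.039 per generated image, as quoted above, and ignores any other charges that may apply (such as input processing); the names `PRICE_PER_IMAGE_USD` and `estimate_cost` are illustrative and not part of any Google API.

```python
from decimal import Decimal

# Per-image API price quoted in the article (USD).
# Assumption: a flat rate per generated image, with no other charges.
PRICE_PER_IMAGE_USD = Decimal("0.039")


def estimate_cost(num_images: int,
                  price_per_image: Decimal = PRICE_PER_IMAGE_USD) -> Decimal:
    """Return the estimated cost in USD of generating num_images images."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    # Decimal arithmetic avoids binary floating-point rounding in the total.
    return price_per_image * num_images


if __name__ == "__main__":
    # Print rough budgets for a few batch sizes.
    for n in (100, 1_000, 10_000):
        print(f"{n:>6} images: ${estimate_cost(n):.2f}")
```

Under these assumptions, a batch of 1,000 generated images would come to about $39.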

To address safety concerns and transparency, Google confirms that all images created or edited with the model will include SynthID, an invisible digital watermark to identify them as AI-generated. Images produced in the Gemini app will also feature a visible watermark.

Sources: Google, TechCrunch, VentureBeat