Google Research has released TurboQuant, a new compression algorithm designed to reduce the memory demands of large language models. The company says it can shrink a model’s key-value cache by at least six times and speed up a core processing step called attention computation by up to eight times, all without retraining the model or reducing its accuracy.

To understand why this matters, it helps to know what a key-value cache is. When an AI model processes a long piece of text, it stores information about each word or word fragment (a token) as a list of numbers called a vector. These vectors pile up in high-speed memory, and for long documents or conversations, this can fill up the available memory quickly, slowing the model down. Compression techniques can shrink these vectors, but most existing methods introduce their own overhead: they require storing extra reference numbers alongside the compressed data, partially cancelling out the gains.
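To put rough numbers on the problem, the sketch below estimates the size of a key-value cache and shows how per-block reference numbers eat into a nominal compression ratio. The model shape, the block size of 32, and the 16-bit scale and zero point are illustrative assumptions, not figures from Google Research.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bits_per_value):
    """Bytes needed to cache one key and one value vector per token, head and layer."""
    values = 2 * layers * kv_heads * head_dim * tokens  # 2 = keys + values
    return values * bits_per_value / 8


def effective_bits(payload_bits, block_size, overhead_bits_per_block):
    """Bits per value once per-block reference numbers are counted."""
    return payload_bits + overhead_bits_per_block / block_size


# Hypothetical model shape and context length, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM, TOKENS = 32, 8, 128, 100_000

baseline = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, TOKENS, 16)

# Assumed conventional scheme: 4-bit payload plus a 16-bit scale and a
# 16-bit zero point stored for every block of 32 values.
with_overhead = kv_cache_bytes(
    LAYERS, KV_HEADS, HEAD_DIM, TOKENS, effective_bits(4, 32, 32)
)

print(f"16-bit cache: {baseline / 1e9:.2f} GB")
print(f"4-bit cache with per-block overhead: {with_overhead / 1e9:.2f} GB")
print(f"compression: {baseline / with_overhead:.2f}x instead of the nominal 4x")
```

Under these assumed numbers, the extra reference values raise the cost from 4 to 5 bits per number, so the nominal 4x saving drops to 3.2x.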

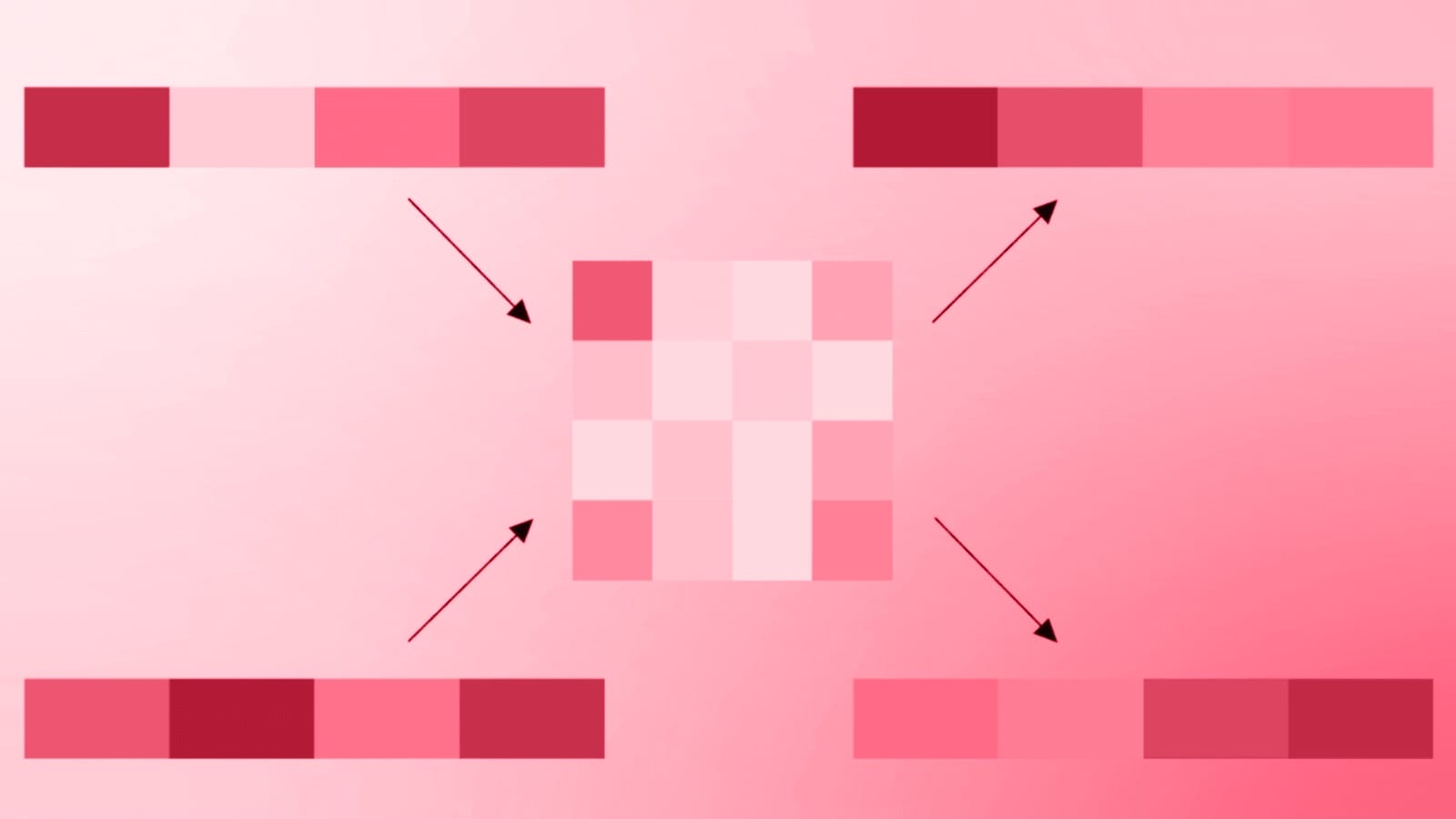

TurboQuant addresses this with a two-stage approach. The first stage uses a companion method called PolarQuant. Instead of describing a vector using standard coordinates, PolarQuant converts it into polar coordinates, the same way a navigator might describe a destination by distance and direction rather than grid references. After a random mathematical rotation of the data, the angles in this polar representation become predictable enough that the system no longer needs to store those expensive reference numbers. The second stage applies a method called Quantized Johnson-Lindenstrauss (QJL) to the small error left over from the first stage, compressing it to a single bit per number. This acts as a mathematical error-checker that prevents small inaccuracies from accumulating.
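The two ideas can be illustrated with a toy sketch. It is a simplification under stated assumptions, not Google's implementation: the random rotation is omitted, coordinates are converted to polar form one pair at a time, radii are kept at full precision, and the second stage is a generic sign-bit estimator in the spirit of QJL. All function names are hypothetical, and the number of random projections is deliberately oversized so the demo estimate is stable.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def quantize_angle(x, y, bits):
    """Map a coordinate pair to (radius, angle code) on a fixed uniform grid.

    The grid is the same for every pair, so no per-block scale is stored.
    """
    levels = 1 << bits
    radius = math.hypot(x, y)
    angle = math.atan2(y, x)  # in [-pi, pi]
    code = int((angle + math.pi) / (2 * math.pi) * levels) % levels
    return radius, code


def dequantize_angle(radius, code, bits):
    """Rebuild the pair from the radius and the centre of the angle bin."""
    levels = 1 << bits
    angle = (code + 0.5) * 2 * math.pi / levels - math.pi
    return radius * math.cos(angle), radius * math.sin(angle)


def sign_sketch(vec, projections):
    """Keep one sign bit per random projection, plus the vector's norm."""
    bits = [1 if dot(row, vec) >= 0 else -1 for row in projections]
    return bits, math.sqrt(dot(vec, vec))


def estimate_dot(query, bits, norm, projections):
    """Unbiased inner-product estimate from sign bits and an unquantized query."""
    total = sum(b * dot(row, query) for b, row in zip(bits, projections))
    return math.sqrt(math.pi / 2) * norm * total / len(projections)


rng = random.Random(0)
DIM, ANGLE_BITS, NUM_PROJECTIONS = 8, 3, 4096  # illustrative sizes only

key = [rng.gauss(0, 1) for _ in range(DIM)]
query = [rng.gauss(0, 1) for _ in range(DIM)]

# Stage 1: coarse polar quantization, pair by pair.
coarse = []
for i in range(0, DIM, 2):
    radius, code = quantize_angle(key[i], key[i + 1], ANGLE_BITS)
    coarse.extend(dequantize_angle(radius, code, ANGLE_BITS))

# Stage 2: sign-bit sketch of whatever stage 1 got wrong.
residual = [k - c for k, c in zip(key, coarse)]
projections = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_PROJECTIONS)]
bits, norm = sign_sketch(residual, projections)

exact = dot(query, key)
stage1 = dot(query, coarse)
corrected = stage1 + estimate_dot(query, bits, norm, projections)

print(f"exact score:      {exact:.4f}")
print(f"stage 1 only:     {stage1:.4f}")
print(f"with sign sketch: {corrected:.4f}")
```

The correction term relies on a standard property of Gaussian projections: the expected product of a query's projection and the sign of a vector's projection is proportional to their inner product. That lets the attention score be corrected without ever reconstructing the residual itself.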

Google Research tested all three algorithms (TurboQuant, PolarQuant, and QJL) on standard benchmarks for long-context tasks, including a test called Needle in a Haystack, which checks whether a model can locate a single sentence buried in 100,000 words. TurboQuant achieved perfect recall scores while reducing memory use by at least six times. On NVIDIA H100 hardware, the four-bit version of TurboQuant delivered an eight-times speed increase in attention computation compared to the standard 32-bit approach.

The algorithms are available publicly at no cost and require no additional training, meaning organizations can apply them to existing models without rebuilding them. According to Google Research, the techniques work across multiple open-source models including Llama, Mistral, and Gemma.

Community members have already begun testing the algorithms independently. One early benchmark, reported on X, found that a 2.5-bit version of TurboQuant reduced the key-value cache by nearly five times on a third-party model with no measurable accuracy loss.

Following the release, stock prices for memory chip manufacturers including Micron and Western Digital declined, reflecting market speculation that software-driven compression could reduce demand for physical memory hardware.

Sources: Google Research Blog, VentureBeat